Is using AI in a 2026 job interview ethical? An honest read of company policies

There are three kinds of companies: those that explicitly ban AI in interviews, those that stay silent, and those that openly say 'come with the tools you actually use at work.' We map who's who, and explain why the historical parallel here is calculators in math exams, not copying off your neighbor.

Every month our support inbox gets the same question: "Will I get fired if they find out?" Each time we answer as honestly as we can: it depends on the company, depends on the country, depends on the year.

This article isn't an "everyone should use AI in interviews" manifesto. Nor is it an "AI in interviews is cheating" sermon. It's a pragmatic read of what's actually happening in tech hiring in 2026, without moralizing and without excuses. We make a product in this space, so we owe our readers a clear public position.

Three types of companies, three different rules

Look at the hiring policies of major employers and you don't see a binary "yes/no." You see three distinct camps.

Group 1 — explicit ban. Most large US tech companies (Google, Meta, Stripe, Airbnb) have added a clause about AI assistants to their interview rules. Typical wording: "use of AI tools, copilots, or real-time assistants during technical rounds is considered a violation and grounds for disqualification." There have been high-profile cases in the US where offers were rescinded after signing: the company found out later and acted retroactively.

Group 2 — silence. As of 2026, most Russian-speaking corporations (Yandex, Avito, Sber, T-Bank, VK, Ozon) don't mention AI in their public interview rules at all. It is neither permitted nor forbidden, and the default in that situation is that what isn't banned isn't a violation. In practice it's a grey zone: one interviewer stays quiet, another shrugs, a third reports it to HR afterward. Behavior depends on the person, not the policy.

Group 3 — open permission. A minority, but it exists. These companies say things like "come with the tools you actually work with." IDE, search, docs, and sometimes AI assistants are explicitly allowed. The logic: "you're going to write code in Cursor with Claude on the job, so we want to see how you actually do it, not how you write on a whiteboard." Some YC-backed startups and product-led teams are already there.

So if you have a Stripe interview, AI is banned and the risk is real. If it's Avito or any Russian-speaking mid-market company, nobody has banned it. If it's a forward-thinking product startup, they might ask you to bring Cursor.

The hypocrisy nobody talks about

Companies already use AI on you while banning you from using AI. This asymmetry rarely makes it into the discussion.

What companies actually do:

- Resume screening with AI models. Your resume passes through an ATS (applicant tracking system) that algorithmically flags it as "relevant" or "irrelevant." Crystallinity, Avito's resume filter, Workday, and Greenhouse all do this. Some resumes are filtered out before a human recruiter ever opens them.

- Anti-cheat on take-home tasks. Codility and HackerRank have used ML models for years to catch candidates who have ChatGPT open in another window. The algorithms watch typing speed, editing patterns, and how the candidate returns to the solution window.

- AI-conducted first-round interviews. Products like Workday HiredScore run the first round automatically: they ask questions, evaluate answers, and send the recruiter a summary.

- AI-evaluated soft skills. Analysis of call recordings for "enthusiasm," "confidence," and "culture fit" is a real feature in several HR-tech products.

Result: your resume is selected by an algorithm, your take-home is checked by an algorithm, your first-round interview is conducted by an algorithm, and your voice is analyzed by an algorithm. But open ChatGPT in round two, and that's cheating.

This asymmetry doesn't dissolve the ethical question for the candidate, but it shifts the discussion. The question is no longer "is AI in an interview cheating?" but "who is allowed to use AI at which step, and why?"

Calculators in math exams as a historical parallel

In the 1970s, basic pocket calculators had just reached US classrooms. Most teachers considered them cheating tools because students wouldn't learn to do arithmetic in their heads. They were banned in exams and confiscated in class.

By the late 1990s, graphing calculators (such as the Texas Instruments TI-83) were required equipment for AP math exams in the US, and sections of the test assumed you had one. The same device that had been "cheating" twenty years earlier was now built into the standard.

This trajectory repeats. In 2005, searching the internet during the workday drew "a real professional should know it by heart." By the 2010s it was standard practice. In 2008, Stack Overflow drew "don't copy code, think for yourself." By 2015 it was a routine part of professional work. In 1995, IDE autocomplete drew "real programmers write in vi without hints." Today almost nobody works seriously without LSP or IntelliSense.

AI in interviews is right where Google search was in 2005-2008. Part of the market sees it as cheating, part as normal. In 5-10 years it'll most likely become as standard a part of interviews as bringing a laptop with an IDE.

Same cycle, same stage, same reaction from the conservative tail of the industry. History has already answered this one.

What changes when AI becomes normal

Big companies are already adapting to the new reality, not with bans but by changing interview formats. Here's what we see in 2026:

Behavioral rounds gain weight. "Tell me about a conflict with your tech lead" used to be a formality; now it's becoming the central round. AI doesn't know your personal experience. The STAR framework (Situation, Task, Action, Result), specific stories, personal reflection: none of that can be credibly generated for you.

Whiteboard and pen-and-paper rounds are coming back. Some on-site rounds at Google and Meta have returned to whiteboards. The reason isn't that the whiteboard is better. It's that AI can't yet read a whiteboard through a webcam and prompt you in real time.

Onsite or paid work trials. Some companies remove the question entirely: you come into the office for a day, work in their infrastructure alongside their engineers, and they watch you solve a real problem. AI is welcome at that point, but this format is also the most honest read of what you can actually do.

Week-long paid take-homes with a defense. A long format with a real task and a follow-up presentation is harder to "cheat." If the task takes 30 hours and you defend your architecture decisions, AI helps in some places and hurts in others, and the final result speaks for itself.

All these formats are reasonable, and most of them are more honest than a 45-minute whiteboard puzzle. In that sense, AI becoming a standard tool is pushing the industry toward more truthful hiring formats.

The real risk of using AI in an interview

The most common failure mode isn't getting caught by a detector. It's failing to deliver the hint as your own.

Here's what happens to candidates who overestimate the helper:

- Reading off the screen verbatim. Your tone shifts to "reading," and the pauses between phrases become unnaturally even. An experienced interviewer catches it within a minute. A good AI helper gives you a structured outline, not a script to read.

- Can't answer follow-up questions. The interviewer asks "why did you pick this approach?", and you didn't pick it, you read it. The AI pulled a templated solution, and the context never built up in your head.

- Inconsistencies with prior answers. In round one you said you'd never used Kubernetes. In round two the AI gave you an answer that assumed six months of k8s experience. The contradiction surfaces in round three.

- A suspiciously perfect answer to a basic question. When a senior candidate gives an encyclopedia-precise answer on fundamentals, it is sometimes legitimate and sometimes a red flag. It depends on context.

The real 2026 skill isn't "use AI in interviews"; it's "use AI so that it actually works": hint → understanding → your own phrasing → answer. That takes time and practice. Candidates who expect AI to "solve it for me" fail more often, not less.

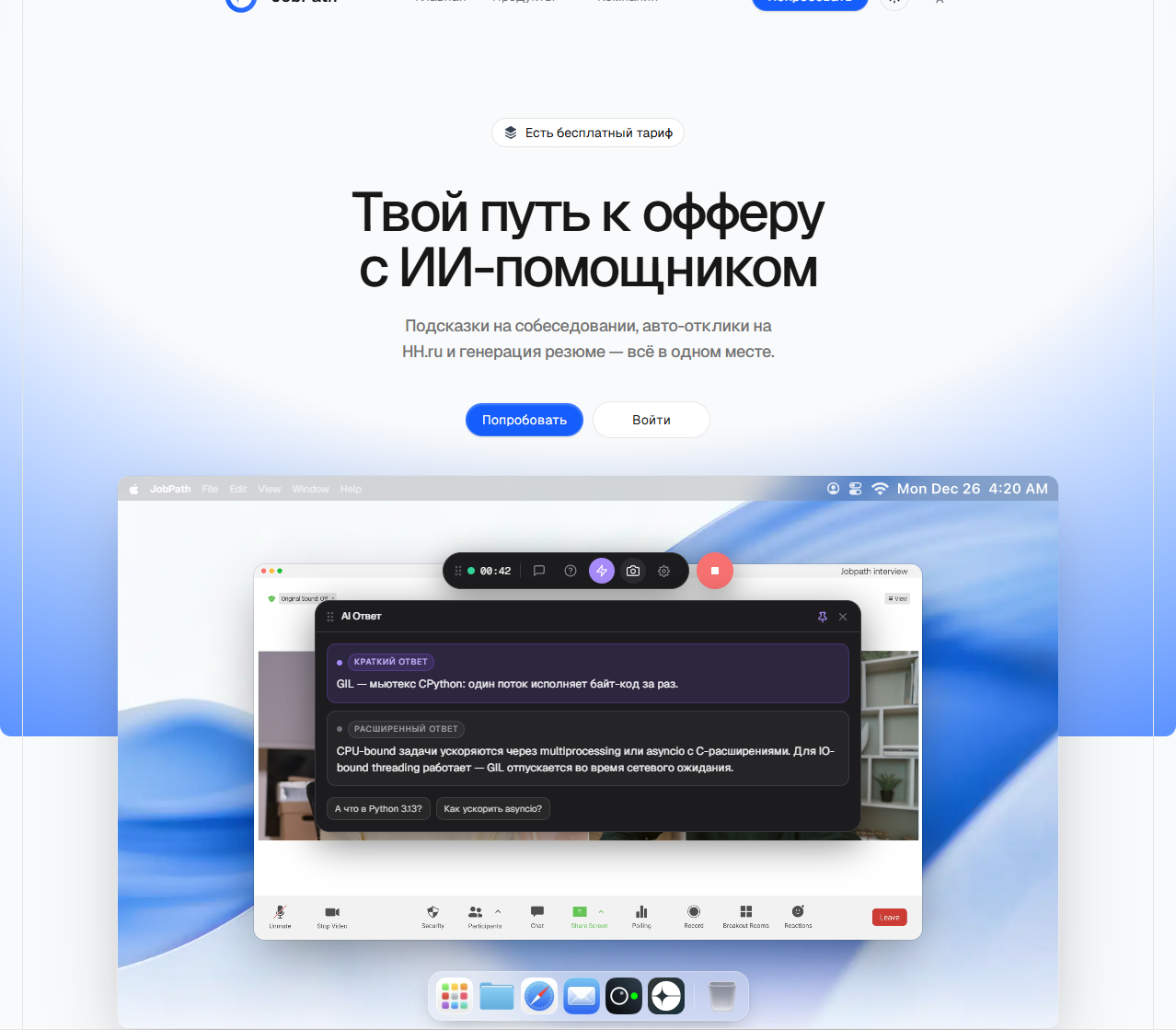

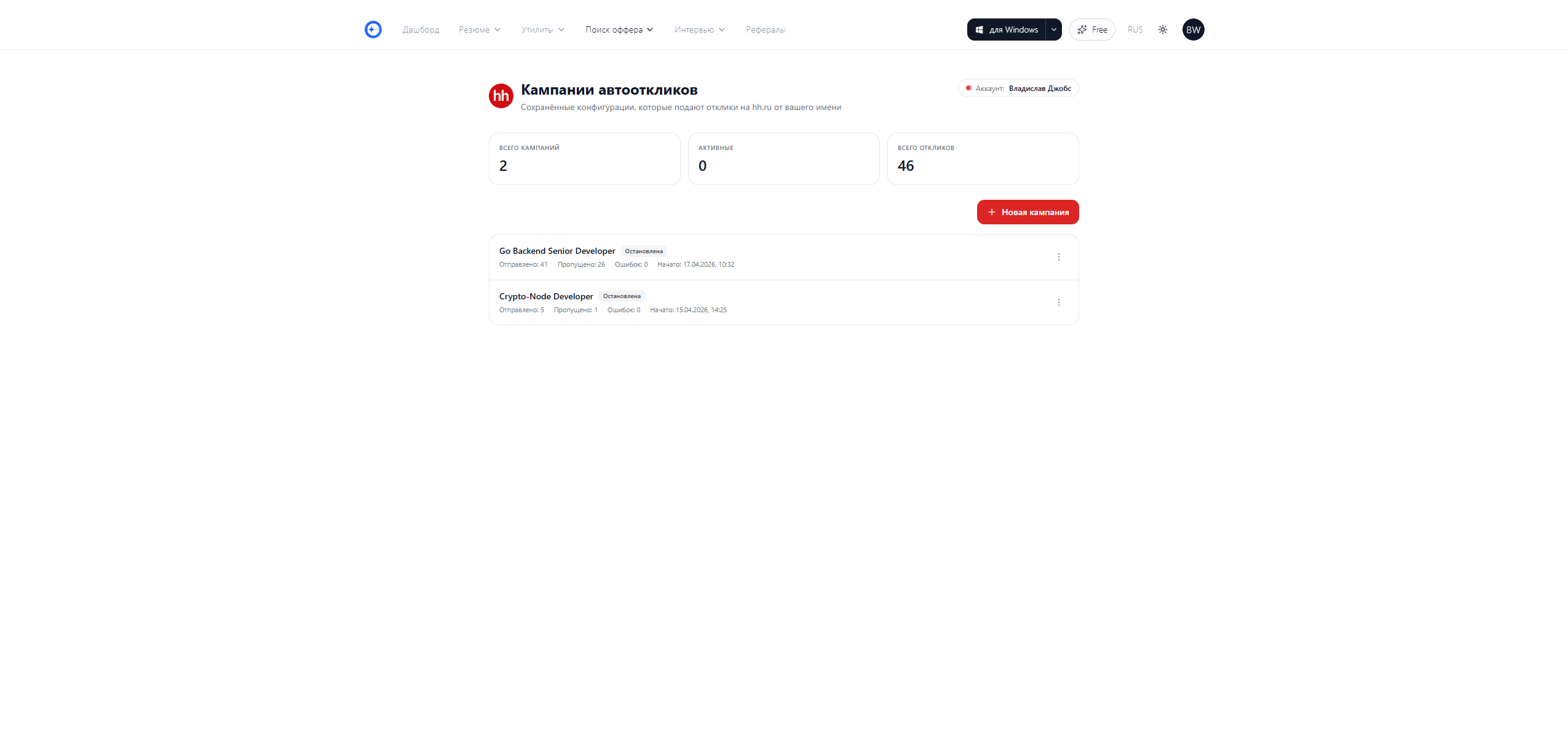

Where JobPath stands

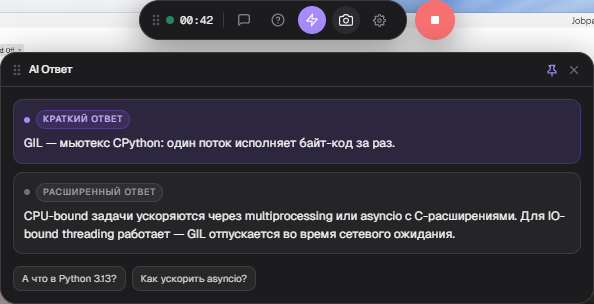

To put it plainly: we make a tool for the grey zone. It's legally available, but the company on the other side of the call may not approve. That's part of the product reality, and we say so openly.

What we do deliberately:

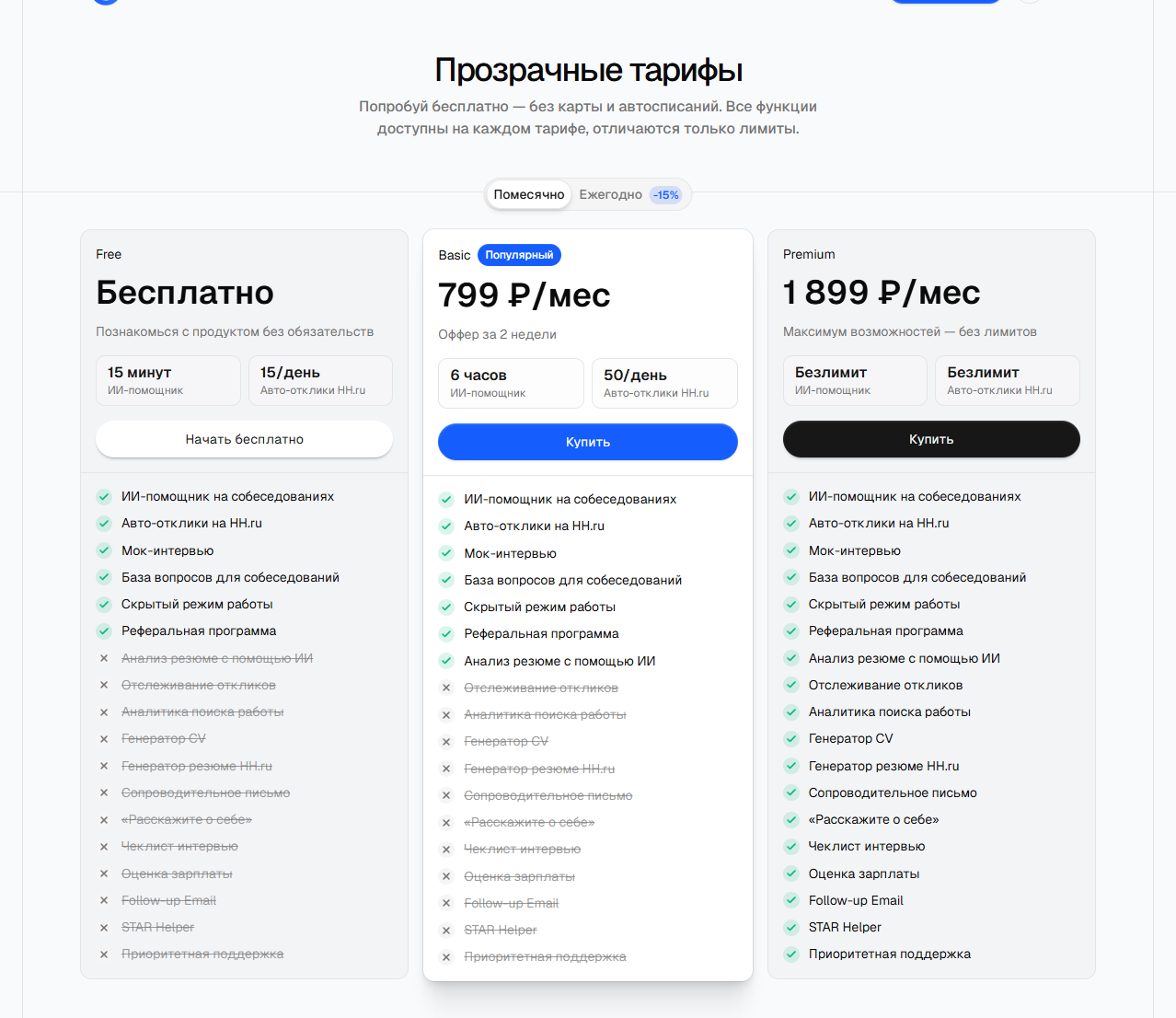

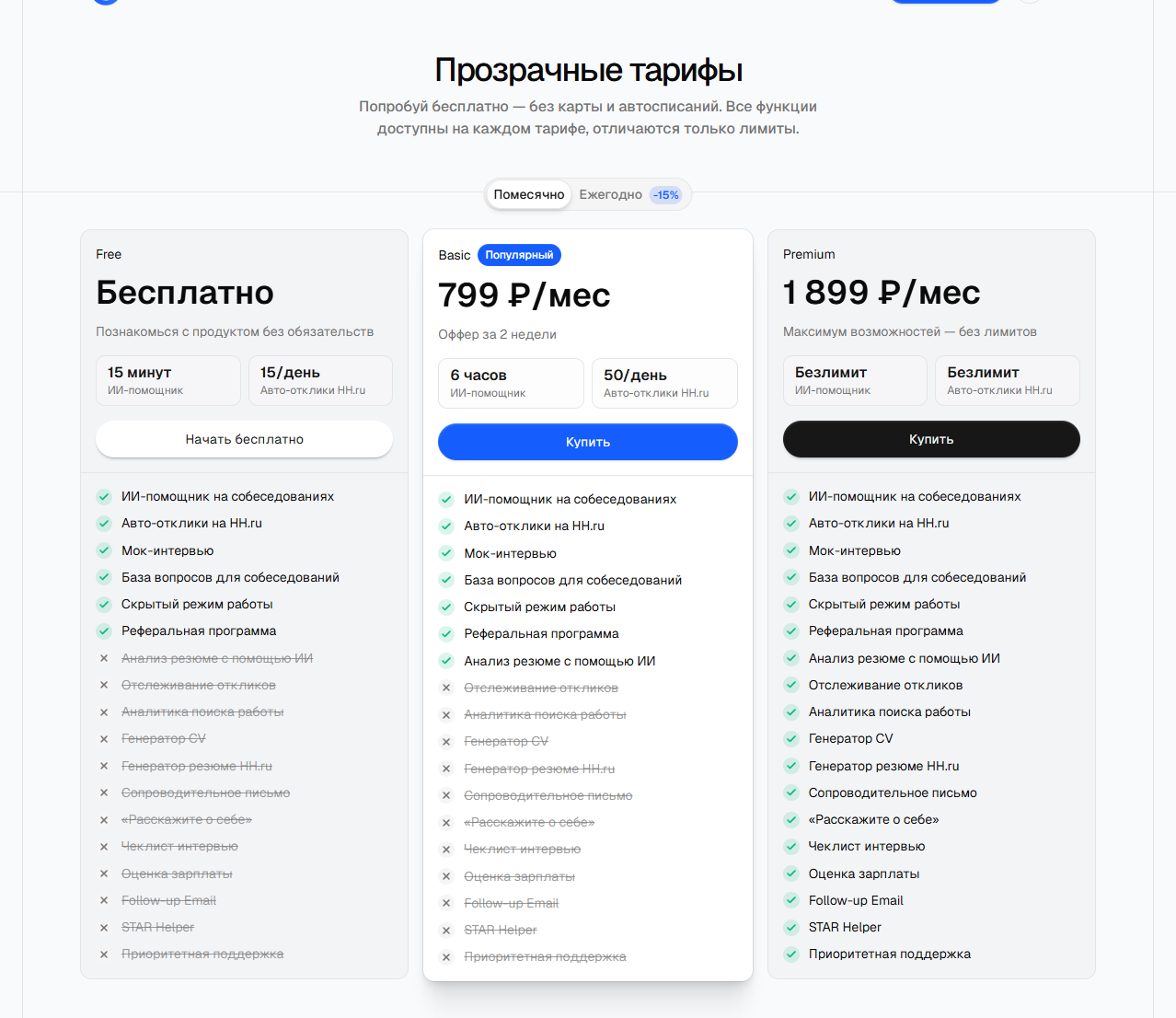

- Publish full prices: Free 0 RUB, Basic 799 RUB, Premium 1899 RUB. No opaque credit-based pricing.

- Acknowledge the ethical question in our public copy. The FAQ on our landing page states clearly that not all companies allow this, and so does the checkout page.

- Don't optimize for the anti-detection arms race. We do OS-level stealth: the window is filtered out of what the screen-share API captures. We don't build "phantom windows to fool Task Manager," as some competitors do. That's a different product philosophy.

We're a product for the grey zone, not for deceiving an explicit ban. If a company explicitly forbids AI in interviews, using it anyway is your decision, and the risks are yours.

The final test

Here's a question to check yourself with.

If the interviewer asked you in the middle of the call, "Are you using an AI assistant?", what would your honest answer be?

- "Yes, the way I'd use Stack Overflow on the job." You're fine. Not every company will agree, but the position is ethically consistent.

- "I'm using it like a calculator for an unfamiliar technology." Same thing; it's a workable stance.

- A lie. That's where the ethical problem lives, and it isn't about AI. It's about lying to someone to get a job.

AI in interviews is a tool. The ethical responsibility for using it sits with the user, not with the tool. The calculator isn't responsible for being used to cheat. Stack Overflow isn't responsible for code being copied wholesale. JobPath isn't responsible for someone deciding to abuse the advantage we offer.

The decision is yours. We can only build a product that doesn't make the moral call for the user. That's what we do.

If you want to try it, the Free plan gives you 15 minutes a month with no credit card required. Go to jobpath.world, download the desktop client, and run it on a practice call with a friend. Decide for yourself.